Now, let’s use the web developer tools in our browser to inspect the HTML behind these posts. Each sale is displayed with a large header that contains the two pieces of information we want, the item and the price:

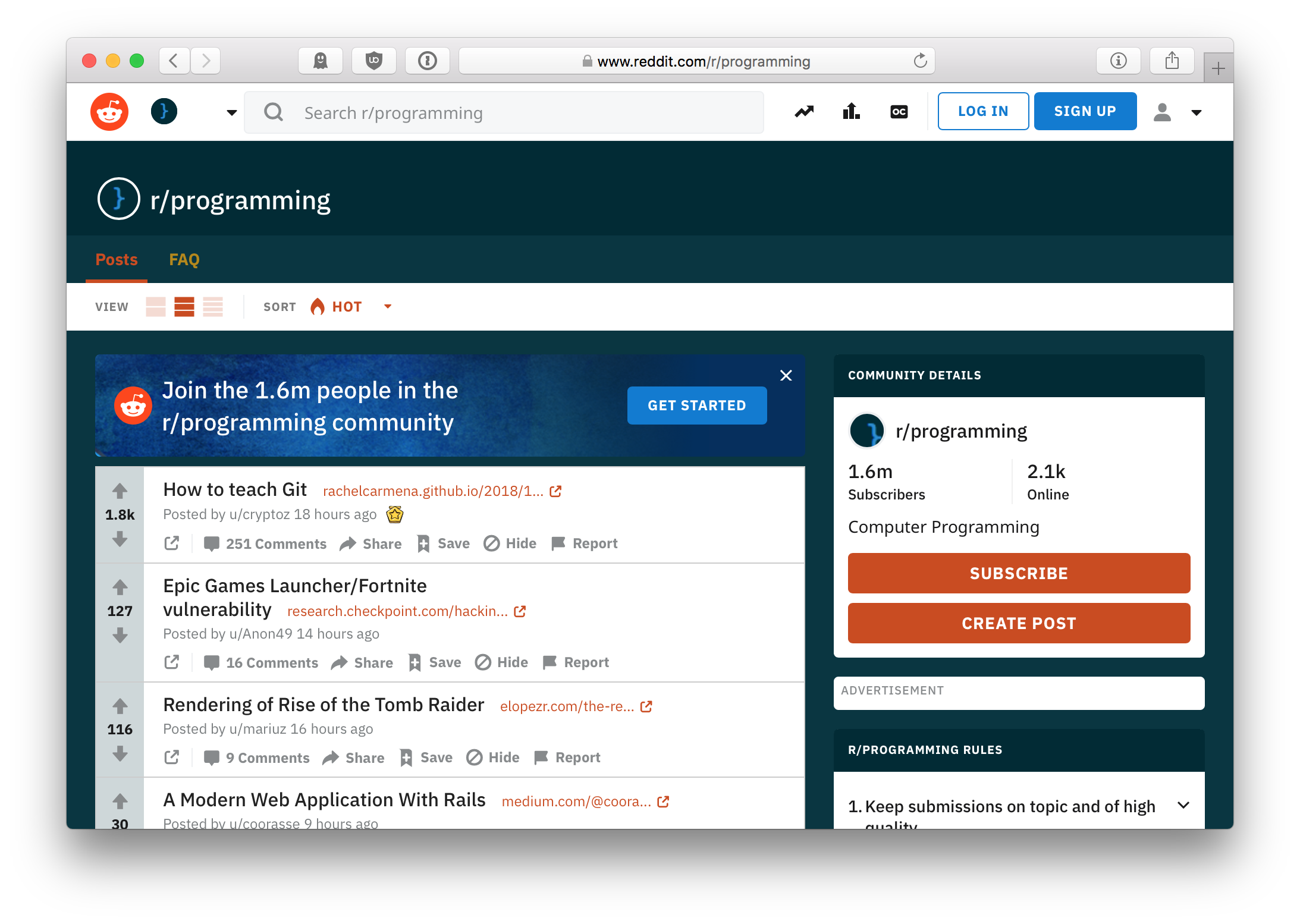
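Since the header text carries both the item and the price, a small parser can split one from the other once the title has been scraped. The following is a minimal Python sketch, not the article's own code. It assumes titles look roughly like `[Category] Item description - $123.45`, and the names `Deal` and `parse_deal_title` are hypothetical.

```python
import re
from typing import NamedTuple, Optional


class Deal(NamedTuple):
    category: Optional[str]
    item: str
    price: Optional[float]


# Assumed title shape: "[Category] Item description - $123.45"
_CATEGORY = re.compile(r"^\s*\[([^\]]+)\]\s*")
_PRICE = re.compile(r"\$\s*(\d[\d,]*(?:\.\d{1,2})?)")


def parse_deal_title(title: str) -> Deal:
    """Split a post title into category, item text, and first listed price."""
    rest = title.strip()

    # Optional leading "[Category]" tag.
    category = None
    tag = _CATEGORY.match(rest)
    if tag:
        category = tag.group(1).strip()
        rest = rest[tag.end():]

    # First dollar amount, if any; everything before it is the item text.
    price = None
    amount = _PRICE.search(rest)
    if amount:
        price = float(amount.group(1).replace(",", ""))
        rest = rest[:amount.start()]

    # Trim separators left between the item text and the price.
    item = rest.strip(" -|(")
    return Deal(category, item, price)
```

Titles that do not follow the assumed shape still parse. The missing fields come back as `None`, so a caller can filter on `price is not None` before comparing deals.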

Let’s take a look at the structure of BuildAPCSales.

Web Scraping with Invoke-WebRequest

First, we need to take a look at how the website is structured. Web scraping is an art: because every website is structured differently, we need to study how the HTML is laid out and then use PowerShell to parse it and gather the info we are looking for. I know there is a Reddit API available that we could interface with, but for the purpose of demonstrating how to build a web scraping tool we are not going to use it. Also, because stock is limited for some of these sales, it would be extremely beneficial to know about these deals as soon as they are posted.
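The idea of walking the HTML structure to pull out the parts we care about can be illustrated outside PowerShell as well. Below is a minimal Python sketch using only the standard library's `html.parser`. It assumes, purely for illustration, that each post title sits inside an `<h3>` element. The real markup has to be confirmed in the browser's developer tools, and `HeadingCollector`, `extract_headings`, and `SAMPLE_HTML` are hypothetical names.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collect the visible text of every element with a given tag name."""

    def __init__(self, tag="h3"):
        super().__init__()
        self.tag = tag
        self._depth = 0      # > 0 while inside a matching element
        self._buffer = []    # text fragments of the current element
        self.headings = []

    def handle_starttag(self, tag, attrs):
        if tag == self.tag:
            self._depth += 1
            if self._depth == 1:
                self._buffer = []

    def handle_endtag(self, tag):
        if tag == self.tag and self._depth > 0:
            self._depth -= 1
            if self._depth == 0:
                # Join fragments and collapse runs of whitespace.
                text = " ".join("".join(self._buffer).split())
                if text:
                    self.headings.append(text)

    def handle_data(self, data):
        if self._depth > 0:
            self._buffer.append(data)


def extract_headings(html, tag="h3"):
    """Return the text of each <tag> element found in the HTML string."""
    parser = HeadingCollector(tag)
    parser.feed(html)
    parser.close()
    return parser.headings


# Made-up markup standing in for a downloaded page.
SAMPLE_HTML = """
<div><h3>[CPU] Example Chip - $199</h3><p>posted 1h ago</p>
<h3><a href="#">[SSD] Example
   Drive - $89</a></h3></div>
"""

if __name__ == "__main__":
    for title in extract_headings(SAMPLE_HTML):
        print(title)
```

Nested tags inside the heading, such as the link in the second sample post, are handled because text is collected until the matching closing tag is reached.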

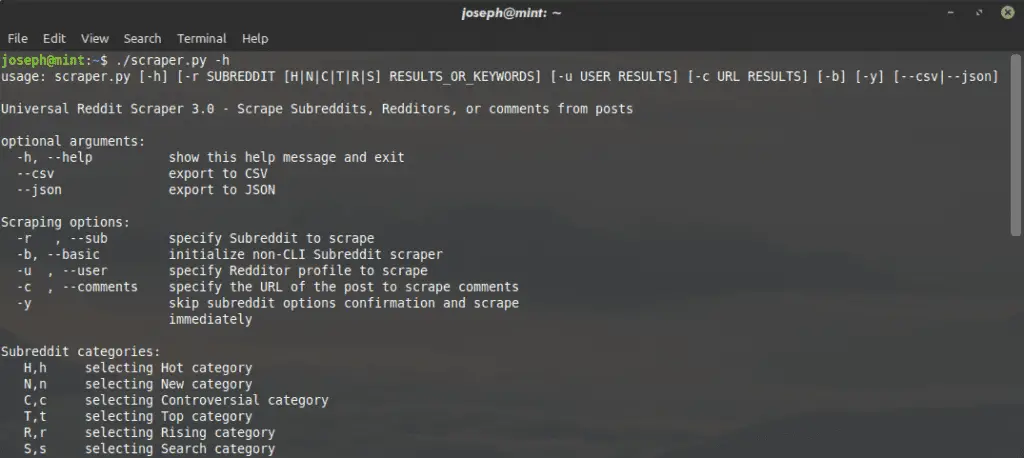

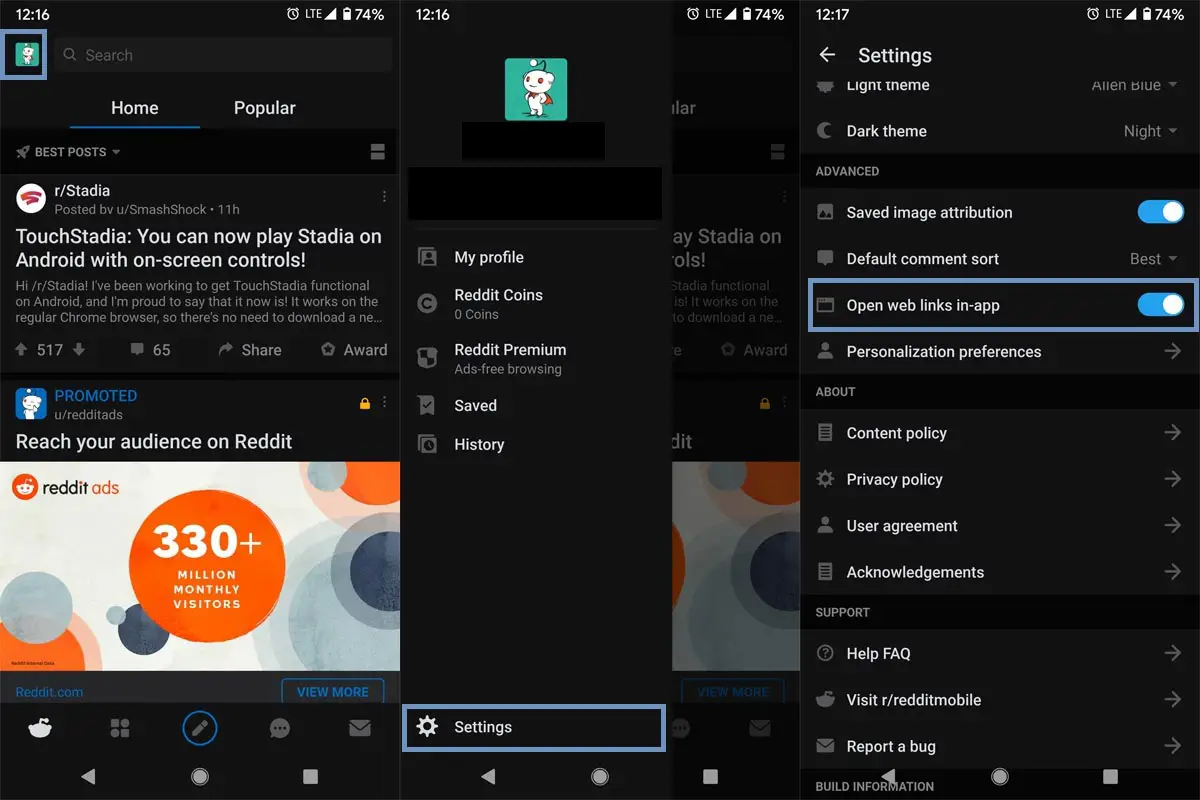

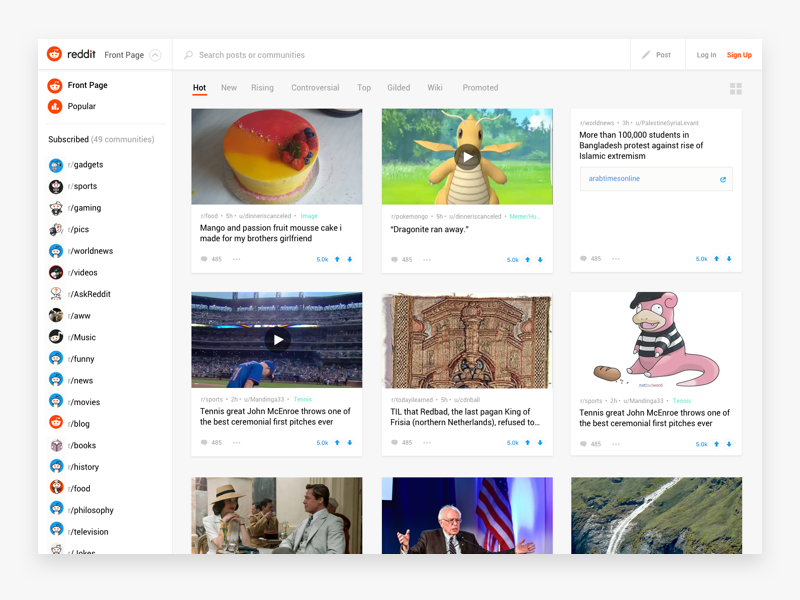

Building a web scraping tool can be incredibly useful for MSPs. There isn’t always an API or PowerShell cmdlet available for interfacing with a web page. However, there are other tricks we can use with PowerShell to automate the collection and processing of a web page’s contents. This can be a huge time saver in cases where collecting and reporting on data from a web page can save employees or clients hundreds of hours. Today I’m going to show you how to build your own web scraping tool using PowerShell.

We are going to scrape the BuildAPCSales subreddit. This is an extremely useful web page, as many users contribute by posting the latest deals on PC parts. As an avid gamer, I would find it extremely useful to have a tool check it routinely and report back on any deals for the PC parts I’m looking for.

Now that the authentication phase is complete, we move on to implementing the Reddit scraper. This part explains everything you must do to obtain the data this tutorial is after. We begin by importing all required modules and libraries into the program file. Before importing the PRAW library, we must install it by executing the following line at the command prompt: `pip install praw`

To extract a post’s comments, load the submission with `submission = reddit_authorized.submission(url=url)`, collect the comments into a data frame with `comments_df = pd.DataFrame(post_comments, columns=)`, and print the result with `print("Number of Comments : ", comments_df.shape)`. Have a look at all the 44 comments extracted for the post in the following image.

PRAW is a Python wrapper for the Reddit API, allowing us to use the API through a straightforward Python interface. The API can be used for web scraping, bot creation, and other purposes. This tutorial addressed authentication, retrieving the most popular weekly, monthly, and yearly posts from a subreddit, and extracting a post’s comments.
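The comment-extraction step mentioned above could look roughly like the sketch below. It is an illustration under assumptions rather than the tutorial's original code. `fetch_comment_bodies` expects an already authenticated `praw.Reddit` client (called `reddit_authorized` in the text), the column name `"comment"` is a guess, and both function names are hypothetical. The PRAW calls used are `submission(url=...)`, `comments.replace_more(limit=0)`, and `comments.list()`.

```python
def fetch_comment_bodies(reddit, url):
    """Return the text of every comment on the post at `url`.

    `reddit` is assumed to be an authenticated praw.Reddit instance, e.g.
    praw.Reddit(client_id=..., client_secret=..., user_agent=...).
    """
    submission = reddit.submission(url=url)
    # Drop the "load more comments" placeholders so that only real
    # comments remain in the tree (no extra requests are made).
    submission.comments.replace_more(limit=0)
    # .list() flattens the comment tree, replies included.
    return [comment.body for comment in submission.comments.list()]


def comments_to_rows(bodies):
    """Turn raw comment texts into one-column rows, skipping empty ones."""
    rows = []
    for body in bodies:
        text = " ".join((body or "").split())  # collapse whitespace
        if text:
            rows.append({"comment": text})
    return rows
```

The rows can be handed to `pd.DataFrame(rows)` if pandas is available, and `len(rows)` gives the number of comments. Keeping the network call separate from the row-building step makes the latter easy to check without Reddit credentials.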
